As science and technology advance toward greater intelligence and efficiency, lidar laser modules and their applications are receiving more and more attention. However, several misunderstandings about lidar technology and performance persist. This article addresses five common ones.

1. The technology of lidar application is complex

Although lidar is a complex sensor made up of different hardware, its basic working principle is actually quite simple. The sensor uses a time-of-flight method, a detection principle similar to bats using sound waves or radar using microwaves.

If we break the sensor down into its components, namely the laser, the detector and the beam deflection unit, lidar is no longer a daunting technology. The laser source first emits laser pulses. These pulses are deflected into the scene by micro-galvanometer mirrors. The detector then picks up the reflected light, and the distance is calculated from the pulse's emission time and return time.
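The distance calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the function name and the assumption that both timestamps are available in seconds are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def tof_distance_m(emit_time_s: float, return_time_s: float) -> float:
    """Estimate target distance from pulse emission and return timestamps.

    Hypothetical helper: assumes both timestamps come from the same clock.
    """
    round_trip_s = return_time_s - emit_time_s
    if round_trip_s < 0:
        raise ValueError("return time must not precede emission time")
    # The pulse travels to the target and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


if __name__ == "__main__":
    # A pulse that returns one microsecond after emission
    # corresponds to a target roughly 150 m away.
    print(f"{tof_distance_m(0.0, 1e-6):.1f} m")
```

The division by two is the only subtle step: the measured time covers the path to the object and back again.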

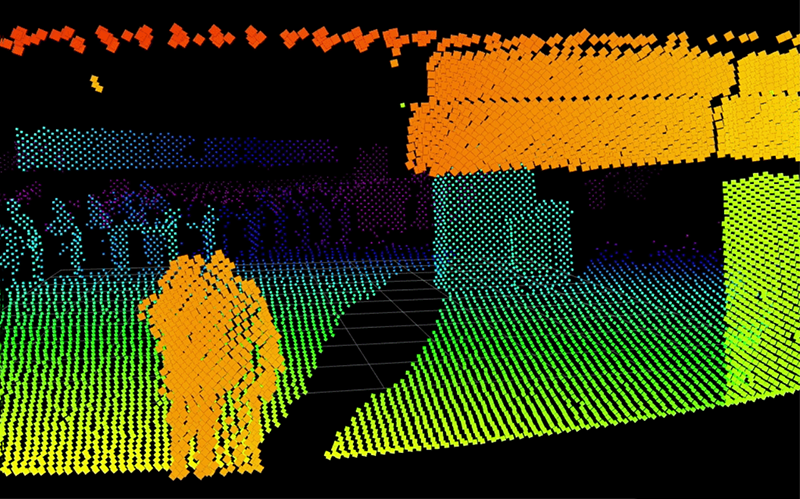

This process is repeated thousands or even millions of times per second to generate accurate 3D environment point clouds in real time. These 3D point cloud data are easy to analyze and exploit, for example, for autonomous driving decision-making.
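As a rough sketch of how repeated measurements become a point cloud, each range reading can be combined with the beam's deflection angles and converted to Cartesian coordinates. The axis convention (x forward, y left, z up) and the function names are assumptions made for this example; real sensors define their own coordinate frames.

```python
import math


def to_cartesian(range_m, azimuth_rad, elevation_rad):
    """Convert one range/angle measurement to an (x, y, z) point.

    Assumed convention: x forward, y left, z up; azimuth is measured in
    the horizontal plane, elevation upward from that plane.
    """
    horizontal = range_m * math.cos(elevation_rad)
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = range_m * math.sin(elevation_rad)
    return (x, y, z)


def build_point_cloud(measurements):
    """Turn an iterable of (range, azimuth, elevation) tuples into 3D points."""
    return [to_cartesian(r, az, el) for r, az, el in measurements]


if __name__ == "__main__":
    scan = [(10.0, 0.0, 0.0), (10.0, math.pi / 2, 0.0), (5.0, 0.0, math.pi / 6)]
    for point in build_point_cloud(scan):
        print(tuple(round(c, 3) for c in point))
```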

The technology emerged after the invention of pulsed lasers in the early 1960s, which emit repeated pulses of light rather than a continuous wave.

2. In self-driving car applications, lidar is redundant

Elon Musk dismissed the use of lidar in self-driving cars at a conference in 2019, an incident that has spawned many myths about lidar to this day. He claimed that cameras aided by smart algorithms make lidar redundant and will always be sufficient on their own.

Cameras collect color images that are interpreted with image recognition techniques, but a single camera captures only 2D data, which can easily lead to optical illusions and misjudged distances. There are tragic examples of these flaws proving dangerous and sometimes fatal.

In contrast, lidar can reliably capture 3D data and accurately identify distances and object sizes.

Integrating accurate 3D lidar data lets the vehicle keep perceiving its surroundings even when the camera is "blind", for example while the camera adapts to the change in light after exiting a tunnel.

Furthermore, the 2D images generated by cameras may appear accurate enough to train self-driving car algorithms, but they still contain many inaccuracies that reduce the accuracy of machine learning models and thus the vehicle's ability to sense, predict, and make decisions. Machine learning capabilities for autonomous driving need to be scalable and to solve the "long tail problem": it is not enough to handle 95% of the scenarios a vehicle faces on the road, because the remaining 5% must be covered as well. Training on these tricky situations while continuously improving performance requires a very large amount of data when the system relies on cameras alone.

In contrast, lidar can feed more machine learning prediction models while generating higher-precision training data. Lidar is therefore a necessary sensor for more reliable and robust autonomous driving systems.

3. Lidar can be completely replaced by other sensors

One of the most common misconceptions about lidar is that it can be replaced by a camera or radar sensor. This misconception stems from a lack of understanding of how these sensor technologies detect and classify objects in different ways. Once we understand the capabilities of these sensors and the types of data they produce, we can see how they complement each other.

A camera captures a 2D image that provides grayscale or color information, texture and contrast. Image recognition software is required to analyze this data further. Because the camera uses a passive measurement principle, objects need to be illuminated to be detected. Additionally, creating 3D images requires two or more cameras as well as high computing power.

Radar measures three-dimensional information and is extremely accurate in determining the distance and speed of objects. However, its resolution is low, so it cannot detect objects accurately (on a centimeter scale) or classify them.

LiDAR creates a point cloud from the collected three-dimensional data. Based on the shape and size of the point cloud, it can accurately detect objects and classify them into different categories, such as people, cars, buildings, etc.
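To make the idea of classifying by shape and size concrete, here is a deliberately simple sketch that labels a cluster of points from its bounding box. The size thresholds and category rules are illustrative assumptions invented for this example; production systems typically rely on trained models rather than fixed rules.

```python
def bounding_box_size(points):
    """Return the (x, y, z) extents in meters of an axis-aligned bounding box."""
    xs, ys, zs = zip(*points)
    return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs))


def classify_by_size(points):
    """Toy rule-based classifier; all thresholds are hypothetical."""
    length, width, height = bounding_box_size(points)
    footprint = max(length, width)
    if footprint < 1.0 and 1.0 <= height <= 2.2:
        return "person"
    if 2.5 <= footprint <= 6.0 and height <= 2.5:
        return "car"
    if footprint > 6.0 or height > 4.0:
        return "building"
    return "unknown"


if __name__ == "__main__":
    pedestrian = [(0.0, 0.0, 0.0), (0.4, 0.3, 1.7)]
    sedan = [(0.0, 0.0, 0.0), (4.5, 1.8, 1.5)]
    print(classify_by_size(pedestrian), classify_by_size(sedan))
```

Even this crude rule set works only because the point cloud carries metric dimensions directly, which a single 2D image does not.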

LiDAR fills the gaps of other sensor technologies by collecting highly detailed and reliable three-dimensional information. It can detect and accurately classify targets in various environments, making it stand out among various types of sensors. Data from cameras can be used for deeper analysis, and range and speed data collected by radar can be verified with LiDAR for greater accuracy. This means that in the future all sensor-based applications will integrate cameras, radar systems, lidar and other sensors.

4. Lidar cannot work in harsh environmental conditions

Cameras cannot operate without sufficient ambient lighting. In automotive applications, for example, the camera's detection range extends only as far as the headlights reach. In contrast, lidar has a detection range of hundreds of meters regardless of lighting conditions because it relies on infrared laser beams rather than visible light. In other words, a self-driving car equipped with a lidar sensor can drive as smoothly in the dark as during the day, even with the headlights turned off.

When it comes to harsh conditions like fog, rain or snow, LiDAR once again shows a clear advantage in performance and can make up for the shortcomings of other sensors (such as cameras) in the perception system.

Lidars often perform better than cameras in the rain because their beams are large. This allows the beam to pass around obstructions (such as raindrops) on the sensor mirror, so the lidar's range is largely unaffected. In comparison, a camera's pixel size is much smaller than a raindrop, so its view is obscured.

The large beam also enables the lidar to detect multiple echoes from different ranges and process only the one with the strongest signal. This can also be useful in bad weather conditions, such as when it snows, as the lidar can ignore the impact of reflections from snowflakes. A camera without any machine learning algorithms cannot distinguish between snowflakes, wet lenses or hard objects, and ultimately returns a distorted image.
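The multi-echo filtering described above can be sketched as follows. The `Echo` type and the intensity values are assumptions for illustration; actual sensors expose echoes in their own formats and may apply more elaborate selection rules.

```python
from typing import NamedTuple, Optional, Sequence


class Echo(NamedTuple):
    """One return from a single laser pulse (hypothetical structure)."""
    range_m: float
    intensity: float  # relative signal strength, arbitrary units


def strongest_echo(echoes: Sequence[Echo]) -> Optional[Echo]:
    """Keep only the return with the strongest signal, or None if no returns."""
    if not echoes:
        return None
    return max(echoes, key=lambda echo: echo.intensity)


if __name__ == "__main__":
    # Two weak returns from snowflakes close to the sensor,
    # one strong return from a solid object farther away.
    returns = [Echo(3.2, 0.05), Echo(7.5, 0.08), Echo(48.7, 0.80)]
    print(strongest_echo(returns))
```

In this example the weak near-range returns are discarded and the solid object at 48.7 m is the one reported.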

LiDAR also has much shorter exposure times (millionths of a second) than cameras (thousandths of a second), meaning raindrops are not detected as streaks spanning multiple pixels but in their actual shape.
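A quick back-of-the-envelope calculation shows why exposure time matters. The fall speed of about 9 m/s is an assumed typical value for a large raindrop, and the two exposure times are simply the orders of magnitude quoted above.

```python
def motion_during_exposure_m(speed_m_per_s: float, exposure_s: float) -> float:
    """Distance an object moves while the sensor is exposed."""
    return speed_m_per_s * exposure_s


if __name__ == "__main__":
    raindrop_speed = 9.0      # m/s, assumed fall speed of a large raindrop
    camera_exposure = 1e-3    # s, thousandths of a second
    lidar_exposure = 1e-6     # s, millionths of a second

    camera_mm = motion_during_exposure_m(raindrop_speed, camera_exposure) * 1000
    lidar_mm = motion_during_exposure_m(raindrop_speed, lidar_exposure) * 1000
    print(f"camera: drop moves about {camera_mm:.3f} mm during exposure")
    print(f"lidar:  drop moves about {lidar_mm:.3f} mm during exposure")
```

Under these assumptions the drop travels several millimeters during a camera exposure, more than its own diameter, but only a few micrometers during a lidar measurement.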

Since lidar is an optical device, its performance can also be negatively affected in conditions such as heavy fog, but it is still able to provide more valuable data than sensors such as cameras and can detect at longer distances.

5. Lidar sensors are expensive

There was a time when the only lidars available on the market were rotating lidars, which were very expensive and bulky and could not be produced in large quantities. So it's only natural that people still associate lidar with a high price. But since the advent of MEMS (microelectromechanical systems) lidar, this situation has changed completely. MEMS components are made of silicon and are easily scalable for production, making them very cost-effective.

Solid-state LiDAR uses standard components and requires no regular maintenance, thus reducing costs. In recent years, the cost of these lidar sensors has dropped from thousands of dollars to hundreds of dollars, a trend that will continue in the future. In fact, mid-range sensors can even be sold for triple-digit prices when produced in high volumes.

These are some common misconceptions about lidar technology and its applications. In part two of this series, we'll uncover more misunderstandings about lidar that people are overlooking.

In many sensor-based applications, lidar laser module sensors are favored. Even though they are widely used, some misunderstandings about lidar technology remain. The first part covered five common misunderstandings; this second part covers four more.

Myth 1: Lidar is the same as a camera when it comes to privacy protection

Unlike cameras, lidar does not record any color information. It captures only three-dimensional distance data to create point clouds, thereby generating an image of the entire scene while preserving privacy.

As the use of sensors increases year by year, privacy issues have attracted much attention. For example, smart city projects around the world have caused so much controversy over data collection and use that the European Union has considered banning the use of facial recognition technology in public spaces until authorities introduce regulations governing its use.

The root cause of these problems is the ability of sensors to capture and store photos of people and then analyze facial data for identification. While weighing the pros and cons of surveillance and identification applications is indeed a fraught social dilemma, lidar technology can play a key role in protecting privacy.

Algorithms analyze the point cloud data collected by lidar to track pedestrians, vehicles and other objects accurately and reliably. Because lidar records no color information and captures only 3D distance data to create point clouds, the entire scene it generates is anonymous. Its value is fully realized in privacy-sensitive applications such as perimeter security detection, people flow management, and population counting.

Myth 2: iPhone lidar has similar functions to conventional lidar

The type of sensor, scanning range, and resolution used by iPhone lidar determine that it cannot provide the same functionality as conventional lidar.

Apple's recently released iPhone 12 Pro and iPad Pro devices introduced lidar. iPhone lidar creates small-scale 3D depth maps of objects, people and their surroundings by emitting pulses of light. The distances to the resulting points are measured, creating a "dot field" from which a dimensional mesh is generated. How this works might sound familiar, because it is basically an update of the TrueDepth camera technology previously used for facial recognition.

But is this lidar technology the same as conventional lidar technology? iPhone lidar uses flash illumination without scanning: the entire field of view is detected at once using a wide, divergent laser pulse. This is in stark contrast to conventional scanning lidar, which emits a collimated laser beam to capture only one point in the field of view at a time. Although flash lidar also exists among conventional lidars, this article compares iPhone lidar with conventional scanning lidar.

The most significant difference between conventional scanning lidar and iPhone lidar is the detection range: lidar used in applications outside the consumer industry has higher detection range and resolution than iPhone lidar. For example, Hongke's Cube 1 lidar has a range of up to 250 meters, while the iPhone's lidar can only measure and analyze a distance of 5-10 meters.

The large-scale commercialization of lidar in the iPhone has attracted much attention to this technology and made more and more consumers familiar with and understand lidar technology. Just like cameras, it will also promote the development of the entire semiconductor ecosystem, lay a solid foundation for optical and electronics research, and will be more widely used in other industries. In fact, iPhone lidar does improve the camera's focusing speed and accuracy in low-light conditions. However, it currently cannot be applied to large-scale applications such as autonomous vehicles and high-precision maps.

In terms of resolution, a typical scanning lidar like the Cube 1 can generate over 500 scan lines per second, generating hundreds of thousands of data points, resulting in a very dense point cloud. In comparison, iPhone lidar can reportedly only measure up to 500 data points per frame and has lower resolution.

Myth 3: Lidar can damage human eyes

To ensure eye safety, all lidar products are manufactured in accordance with the Class 1 laser eye safety requirements (IEC 60825-1:2014).

Contrary to popular belief, lidar's impact on eye safety depends on a combination of factors, not just the wavelength of the laser. For example, the safety level of a lidar depends largely on the peak power of the laser, which directly affects the sensor's detection range at a specific wavelength. Under normal circumstances, the eyes are more sensitive to lasers with a wavelength of 905 nm. Therefore, the peak power of this particular type of laser needs to be reduced to ensure eye safety.

In contrast, 1550nm wavelength lidar can use higher power and achieve longer-distance detection while ensuring human eye safety. This is because the cornea, lens, aqueous humor and vitreous humor of the eye can effectively absorb any light wave with a wavelength greater than 1400nm, thereby reducing the risk of retinal damage.

The Class 1 eye safety requirements (IEC 60825-1:2014) define the permitted emission limits for various wavelengths. A lidar designed to this standard meets the requirements for human eye safety.

A hotly discussed question on the Internet is what happens at an intersection where multiple lidars emit light of the same wavelength and phase: will they combine into a higher-energy laser, and will that harm human eyes? In theory, superposition can increase the amplitude of these lasers, so the peak power of the pulses also increases and may exceed eye safety limits. While this sounds alarming, in the real world it is nearly impossible. Two lidar sensors would have to emit a laser pulse at exactly the same time, with pulse width, divergence angle, and beam direction all coinciding perfectly at the position of a human eye, to produce such high-energy light. The likelihood of this is very small.
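To give a feel for how unlikely the timing coincidence alone is, the sketch below estimates the chance that a pulse from one sensor overlaps in time with a pulse from a second, unsynchronized sensor. The 5 ns pulse width and 100 kHz repetition rate are illustrative assumptions, not figures from this article or any specific product, and the model ignores beam direction and divergence, which make a real coincidence rarer still.

```python
def pulse_overlap_probability(pulse_width_s: float, repetition_rate_hz: float) -> float:
    """Chance that one pulse overlaps in time with a pulse from a second emitter.

    Simplified model (an assumption for this sketch): both emitters use the
    same pulse width and repetition rate, and their relative timing is
    uniformly random. Two pulses overlap when their start times differ by
    less than one pulse width, a window of 2 * width per repetition period.
    """
    period_s = 1.0 / repetition_rate_hz
    return min(1.0, 2.0 * pulse_width_s / period_s)


if __name__ == "__main__":
    probability = pulse_overlap_probability(pulse_width_s=5e-9, repetition_rate_hz=100e3)
    print(f"temporal overlap probability per pulse: {probability:.4%}")
```

With these assumed values only about one pulse in a thousand overlaps in time, before the spatial conditions at the eye are even considered.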

Myth 4: The application of lidar is very limited

From fleet management to agriculture, security, and smart city applications, there are no limits to the applications of lidar!

Although the integration of lidar into iPhones has begun to challenge this narrative, some people still misunderstand lidar and believe that it is a niche technology with limited applications. This may be because most mainstream media coverage focuses on lidar in the field of autonomous driving. Although this is indeed an application hot spot, lidar has many other applications across various industries.

In agriculture, for example, lidar can be installed on agricultural equipment and vehicles to support automatic control, environmental detection, sowing and fertilizing.

Lidar can also be used in the security field, for tasks such as perimeter security, entrance monitoring, checkpoint monitoring, and monitoring of social distancing during the epidemic.

In smart city applications, lidar supports urban congestion reduction, population counting, and people flow management.

The above are some misunderstandings that everyone may have about lidar and its applications. LiDAR will play a pivotal role in pioneering the future development of science and technology. As the world moves toward automation, LiDAR will become more widely used.

Contact information:

If you have any ideas, feel free to talk to us. No matter where our customers are and what their requirements are, we pursue our goal of providing them with high quality, low prices, and the best service.

Email:info@loshield.com

Tel:0086-18092277517

Fax: 86-29-81323155

Wechat:0086-18092277517